We often have customers ask about our data science processes and, specifically, how we utilize AI, predictive modeling, and statistical analysis in our work. Below, BlueConduit’s VP of Data Science, Alice Berners-Lee, discusses the BlueConduit data science process in her own words.

When we first receive a new customer’s data, our Data Science team, using expert processes and the best technology tools available, always begins with exploratory data analysis:

Alice: ‘Exploratory data analysis’ is basically what has to happen when you familiarize yourself with a dataset and are just exploring. What’s missing from the dataset? What distributions are present? How do different identifiers within the data relate to each other? All of that is analysis, and you can break it down into summaries or insights for people. That’s all statistical analysis, and it has nothing to do with modeling.
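
To make those three questions concrete, here is a minimal sketch in plain Python. It is an illustration only, not BlueConduit's actual tooling: the records, the column names (`parcel_id`, `address`, `year_built`, `material`), and the helper functions are all hypothetical and invented for this example.

```python
from collections import Counter, defaultdict

# Toy records, invented for illustration only (not real customer data).
records = [
    {"parcel_id": "P1", "address": "10 Oak St", "year_built": 1948, "material": "lead"},
    {"parcel_id": "P2", "address": "12 Oak St", "year_built": None, "material": "copper"},
    {"parcel_id": "P3", "address": "7 Elm Ave", "year_built": 1992, "material": None},
    {"parcel_id": "P3", "address": "7 Elm Ave Unit B", "year_built": 1992, "material": "copper"},
]

def missing_counts(rows):
    """What's missing? Count None values per column."""
    counts = Counter()
    for row in rows:
        for key, value in row.items():
            if value is None:
                counts[key] += 1
    return dict(counts)

def value_distribution(rows, column):
    """What distributions are present? Tally non-missing values in one column."""
    return Counter(row[column] for row in rows if row[column] is not None)

def ids_with_multiple(rows, id_column, other_column):
    """How do identifiers relate? Find IDs that map to more than one distinct value."""
    seen = defaultdict(set)
    for row in rows:
        seen[row[id_column]].add(row[other_column])
    return {key: sorted(vals) for key, vals in seen.items() if len(vals) > 1}

missing = missing_counts(records)
materials = value_distribution(records, "material")
duplicates = ids_with_multiple(records, "parcel_id", "address")

print("Missing values per column:", missing)
print("Material distribution:", dict(materials))
print("Parcel IDs tied to several addresses:", duplicates)
```

None of this involves fitting a model. It only summarizes what is in the data, which is the distinction Alice draws.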

After initial data analysis, the team moves into building machine learning models:

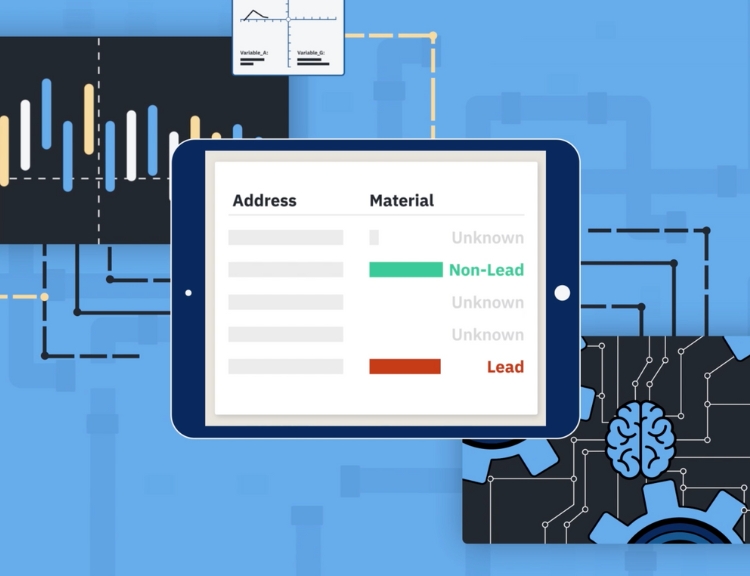

Alice: At BlueConduit, we build machine learning models. That’s our most comfortable way of describing what we do. These are models that perform an optimization, usually minimizing a loss function, to best separate two groups within a multidimensional space. So you have tons of different features about a city (for example, the year houses were built, demographics, whether the house has an addition, whether the house has a basement, all sorts of features) and that creates a multidimensional space that is impossible for human brains to visualize. Within that space lie the labels of lead/GRR and non-lead/GRR. The model is then trying to cut through this multidimensional space and separate those two groups in the most robust way. The loss function measures how well that cut fits the labeled data, and minimizing it finds the best way to discriminate between these two labels.
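
The idea of minimizing a loss function to separate two groups can be sketched with a small logistic regression trained by gradient descent. This is a generic textbook technique chosen for illustration, not a description of BlueConduit's production models. The two features (decades built before 1970, and a basement flag), the six data points, and the training settings are all assumptions invented for the example.

```python
import math

# Toy data, invented for illustration only.
# Each row: (decades built before 1970, has_basement). Label: 1 = lead, 0 = non-lead.
X = [(3.0, 1.0), (2.0, 1.0), (4.0, 0.0), (-2.0, 0.0), (-3.0, 1.0), (-1.5, 0.0)]
y = [1, 1, 1, 0, 0, 0]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, row):
    """Estimated probability that a row belongs to the 'lead' group."""
    return sigmoid(sum(w * x for w, x in zip(weights, row)) + bias)

def log_loss(weights, bias):
    """Average log loss: lower means the boundary separates the labels better."""
    eps = 1e-12
    total = 0.0
    for row, label in zip(X, y):
        p = min(max(predict_proba(weights, bias, row), eps), 1 - eps)
        total += -(label * math.log(p) + (1 - label) * math.log(1 - p))
    return total / len(X)

def train(steps=2000, lr=0.1):
    """Gradient descent: repeatedly nudge the weights in the direction that lowers the loss."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for row, label in zip(X, y):
            error = predict_proba(weights, bias, row) - label
            for j, x in enumerate(row):
                grad_w[j] += error * x / len(X)
            grad_b += error / len(X)
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias

initial_loss = log_loss([0.0, 0.0], 0.0)
weights, bias = train()
final_loss = log_loss(weights, bias)
predictions = [int(predict_proba(weights, bias, row) >= 0.5) for row in X]

print("Loss before training:", round(initial_loss, 3))
print("Loss after training:", round(final_loss, 3))
print("Predicted labels:", predictions)
```

With only two features the boundary is a line you could draw on paper. With dozens of features the same optimization applies, but the boundary sits in a space that cannot be visualized directly, which is the situation described above.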

Beyond data science methods, BlueConduit uses additional methods to solve engineering problems:

Alice: For the data science, we use machine learning for prediction. We also use methods from statistics and optimization to make recommendations. But for the engineering that we do, we are open to using anything. When we are doing science, we care about things being correct and about how we arrived at the correct answer. We care about interpretability and explainability, since ultimately we want to clearly explain to customers and regulators what we’ve done to get these predictions. But to solve an engineering problem, which sometimes has to happen before you can solve a scientific problem, we throw everything at it. Getting information from tap cards, for example, or collecting information from many websites and other unstructured data sources. We use whatever we can to get that information out. Then we run quality control on it, and then we do all of that exploratory data analysis to see whether it is useful information and how it relates to other features. If it is useful, we put that information into a machine learning model.

Ready to explore opportunities for BlueConduit to help guide decision-making with predictive modeling? Schedule a consultation today.