Before you can feel confident in the results of a predictive model or any statistical analysis, you first need to know whether the data being used to make predictions accurately represents the community your water system serves.

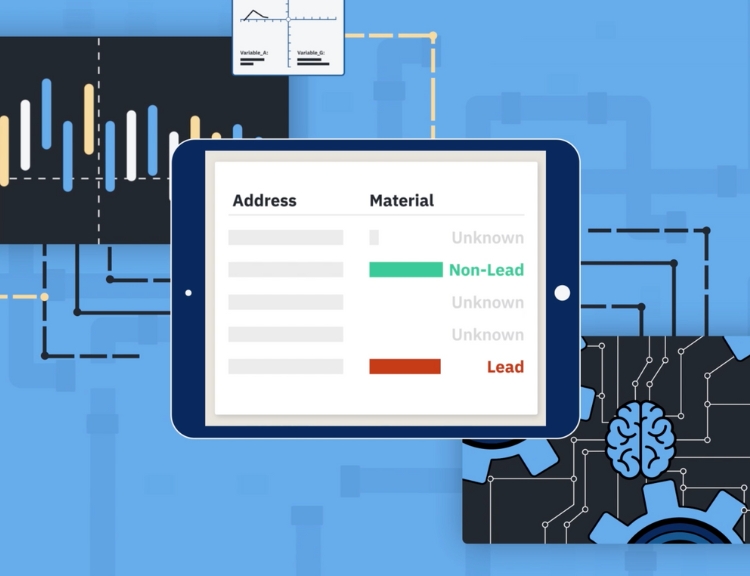

When customers come to BlueConduit for predictions about the likelihood of lead at each address, our Data Science team uses BlueConduit’s predictive modeling technology to assess their dataset and generate an inspection list. The purpose of this initial inspection list is to give the team a representative sample of locations where the customer will physically verify the service line material. A truly representative sample mirrors the complete list of all locations with unknown service line material. For example, if 35% of the homes in the water system were built between 1950 and 1969, then about 35% of the homes in a representative sample should also have been built between 1950 and 1969. On average, this should hold for any measure you can think of. This representative sample is what drives high-quality lead likelihood predictions for the remaining unknowns.

A representative sample is key here. If you rely on a sample that is not representative, the resulting predictions may reflect difficult-to-detect biases. In other words, as our Data Science team says regularly, “Garbage in, garbage out.”

The question is: how should we obtain that representative sample?

There are two approaches: uniform random sampling and stratified random sampling. The names can be confusing, since each approach involves an element of the other.

Uniform Random Sampling

First, we sample. Uniformly random means that every service line with unknown material has an equal chance of being included in the sample. With a large enough sample, this approach produces an unbiased sample, on average. Then, after the random sample is generated, we can check and adjust for any possible biases we are concerned about. For that representativeness check, we group locations into bins defined by some stratifying variable (e.g., decade built) and compare each bin's share of the sample with its share of all unknowns.
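To make the "sample first, check second" idea concrete, here is a minimal sketch in Python using only the standard library. It is an illustration, not BlueConduit's tooling: the record layout (a dict with a `year_built` field), the helper names (`uniform_random_sample`, `representativeness_report`, and so on), and the synthetic population are all hypothetical assumptions. It draws a uniform random sample without replacement, then compares each decade's share of the sample with its share of the full set of unknowns.

```python
import random
from collections import Counter


def decade_bin(year_built):
    """Map a construction year to its decade, e.g. 1957 -> 1950."""
    return (year_built // 10) * 10


def uniform_random_sample(unknown_lines, n, seed=None):
    """Draw n service lines without replacement; each line has the same chance of selection."""
    rng = random.Random(seed)
    return rng.sample(unknown_lines, n)


def bin_shares(lines):
    """Fraction of lines falling into each decade bin (assumes a hypothetical 'year_built' field)."""
    counts = Counter(decade_bin(line["year_built"]) for line in lines)
    total = len(lines)
    return {decade: count / total for decade, count in counts.items()}


def representativeness_report(population, sample):
    """For each bin: (share of all unknowns, share of sample, sample minus population)."""
    pop = bin_shares(population)
    samp = bin_shares(sample)
    return {
        decade: (pop[decade], samp.get(decade, 0.0), samp.get(decade, 0.0) - pop[decade])
        for decade in sorted(pop)
    }


# Hypothetical inventory: 10,000 unknown service lines with synthetic build years.
data_rng = random.Random(0)
unknowns = [{"id": i, "year_built": data_rng.randint(1900, 1999)} for i in range(10_000)]

sample = uniform_random_sample(unknowns, 500, seed=42)
report = representativeness_report(unknowns, sample)
for decade, (pop_share, sample_share, diff) in report.items():
    print(f"{decade}s: all unknowns {pop_share:.1%}, sample {sample_share:.1%}, diff {diff:+.1%}")
```

Large differences in any bin are the signal to adjust, for example by drawing additional locations from under-represented bins; how much difference is acceptable is a judgment call the sketch leaves open.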

Stratified Random Sampling

First, we decide which dimensions we are most concerned about and divide the service lines into bins, or strata (e.g., decade built). Then we draw a uniform random sample from within each stratum. With an appropriate number of service lines drawn from each stratum, this also creates an unbiased sample, on average.
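The sketch below shows one common way to run stratified random sampling: proportional allocation, where each stratum receives a share of the total sample equal to its share of the population, followed by a uniform random draw inside each stratum. Proportional allocation is an assumed choice for illustration (regulators or analysts may require other allocations), and the field and function names are hypothetical. The integer `divmod` rounding hands leftover slots to the strata with the largest remainders so the total comes out exactly to `n`.

```python
import random
from collections import Counter, defaultdict


def stratify(lines, key):
    """Group service lines into strata using a caller-supplied key function."""
    strata = defaultdict(list)
    for line in lines:
        strata[key(line)].append(line)
    return dict(strata)


def proportional_allocation(strata, n):
    """Split n across strata in proportion to stratum size (largest-remainder rounding)."""
    total = sum(len(members) for members in strata.values())
    alloc, remainders = {}, {}
    for stratum, members in strata.items():
        # Exact integer arithmetic: quota = n * size / total, split into whole part and remainder.
        alloc[stratum], remainders[stratum] = divmod(n * len(members), total)
    leftover = n - sum(alloc.values())
    # Give the remaining slots to the strata whose quotas had the largest fractional parts.
    for stratum in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        alloc[stratum] += 1
    return alloc


def stratified_random_sample(lines, key, n, seed=None):
    """Draw a uniform random sample within each stratum, sized by proportional allocation."""
    rng = random.Random(seed)
    strata = stratify(lines, key)
    alloc = proportional_allocation(strata, n)
    sample = []
    for stratum, members in strata.items():
        sample.extend(rng.sample(members, alloc[stratum]))
    return sample


# Hypothetical inventory: 40% built in the 1920s, 35% in the 1950s, 25% in the 1970s.
lines = (
    [{"id": i, "year_built": 1925} for i in range(4000)]
    + [{"id": 4000 + i, "year_built": 1955} for i in range(3500)]
    + [{"id": 7500 + i, "year_built": 1975} for i in range(2500)]
)


def by_decade(line):
    return (line["year_built"] // 10) * 10


sample = stratified_random_sample(lines, key=by_decade, n=200, seed=7)
print(dict(sorted(Counter(by_decade(line) for line in sample).items())))
# {1920: 80, 1950: 70, 1970: 50}
```

Note that the strata themselves (here, decade built) must be chosen before any sampling happens, which is exactly where the risks discussed below come in.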

So, which approach is better? In ideal conditions, on average, they are the same! But conditions are usually not ideal.

Bottom line: both approaches can generate a representative sample to drive high-quality predictive modeling. That said, at BlueConduit, we prefer to start with a uniform random sample across a customer’s full set of not-yet-verified service lines (unless a state regulator requires stratified random sampling, in which case we align our process with state guidance, as we have done in California).

While stratified random sampling does guarantee representation from every stratum, stratifying the dataset before generating the list of sites for physical inspection comes with risks, particularly related to bias and effort:

1. Difficulty in Identifying Strata

Identifying appropriate strata, and ensuring that each subgroup is distinct and internally homogeneous, can be challenging. If the strata are not well defined, or are related to a lurking variable you have not stratified on, the benefits of stratification may be compromised. For example, if you stratify by home age but this stratification leads to more valuable homes being over-represented in the verified sample, the resulting model and predictions will also reflect that bias.

2. More Effort Up-front

By splitting a dataset into multiple strata, you will likely increase the overall number of inspections (the sample size) needed across the full dataset. For lead service lines, visual inspections are time-consuming and costly, which can be a limiting factor for communities. Sometimes that up-front complexity can discourage local leaders from implementing any random sampling at all.
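One way to see why stratification can inflate the inspection count is the textbook sample-size formula for estimating a proportion under simple random sampling, n0 = z²·p(1 − p)/e², with a finite population correction. The scenario below is a hypothetical assumption, not BlueConduit's sizing rule: 10,000 unknown service lines, a target of ±5% at 95% confidence (z = 1.96), the conservative p = 0.5, and a requirement that each of five equal strata be estimated to that same precision on its own.

```python
import math


def sample_size_for_proportion(margin, population=None, z=1.96, p=0.5):
    """Inspections needed to estimate a proportion within +/- margin.

    Defaults assume 95% confidence (z = 1.96) and p = 0.5, the most
    conservative guess for the true share of lead lines. If a finite
    population size is given, the finite population correction is applied.
    """
    n0 = z**2 * p * (1 - p) / margin**2
    if population is not None:
        n0 = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n0)


# Hypothetical scenario: 10,000 unknowns, +/-5% margin of error.
single_pool = sample_size_for_proportion(0.05, population=10_000)

# Same precision required separately in each of 5 strata of 2,000 lines.
per_stratum = sample_size_for_proportion(0.05, population=2_000)
stratified_total = 5 * per_stratum

print(single_pool)       # 370
print(per_stratum)       # 323
print(stratified_total)  # 1615
```

Under these assumptions, demanding stratum-level precision roughly quadruples the number of physical inspections. If the strata only need to be represented proportionally, rather than estimated individually, the increase is much smaller, which is why the effect is "likely" rather than guaranteed.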

3. Potential for Sampling Bias

As highlighted above, if the initial strata or subgroups are not identified correctly or rest on incorrect assumptions about the dataset, there is a risk of introducing sampling bias, leading to inaccurate results. Learn how to identify bias in your LSL inventories.

As you can see, up-front stratification can generate a representative sample, but it creates some risk of bias, requires more up-front effort, and will likely require more on-the-ground inspections than necessary. BlueConduit’s approach of starting with a uniform random sample, combined with our technology tools and an expert Data Science team that checks that the sample is representative, ensures our customers get the best possible predictive model results while using their limited resources efficiently and avoiding excessive inspections.

Ready to learn more about BlueConduit’s predictive modeling technology and tools? Get in touch!